Clicktale is now Contentsquare

Contentsquare x Clicktale

We've joined forces to create the global leader in digital experience analytics.

Whether you're analyzing a website or mobile app, or troubleshooting performance, our combined technologies will help you bring online customer behavior to life.

More than 750 enterprise brands use Contentsquare x Clicktale to understand and optimize their digital experiences

Contentsquare unlocks customer insights for your entire digital team

It’s one platform that’s powerful enough for every digital role, from marketers to product managers to IT.

“Within just 4 months we increased annual revenue by $3.5 million, which is a huge win and one which we’d have never been able to see if it wasn’t for Contentsquare.”

Craig Harris

Head of Performance Analytics

WEB & APP ANALYSIS

Bring customer behavior to life

Do you understand the why behind your digital analytics?

Contentsquare connects metrics to actual customer behavior, combining rich data with machine and human intelligence to deliver better outcomes.

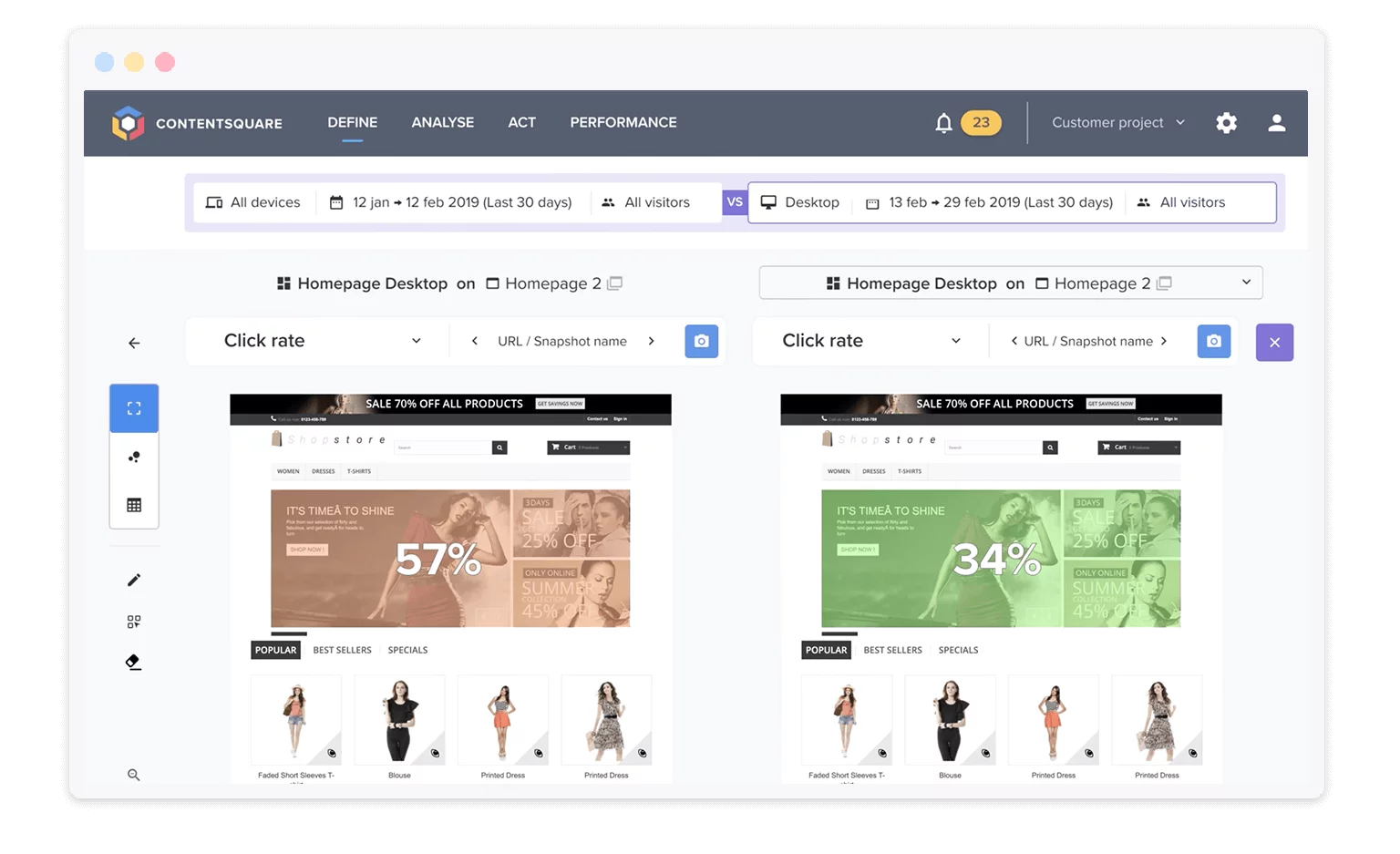

HEATMAPPING

Effortless, intuitive heatmaps

Our software takes out all the manual work: no more tagging different zones on your page.

Powerful yet intuitive heatmaps measure clicks, scroll depth, engagement, and much more.

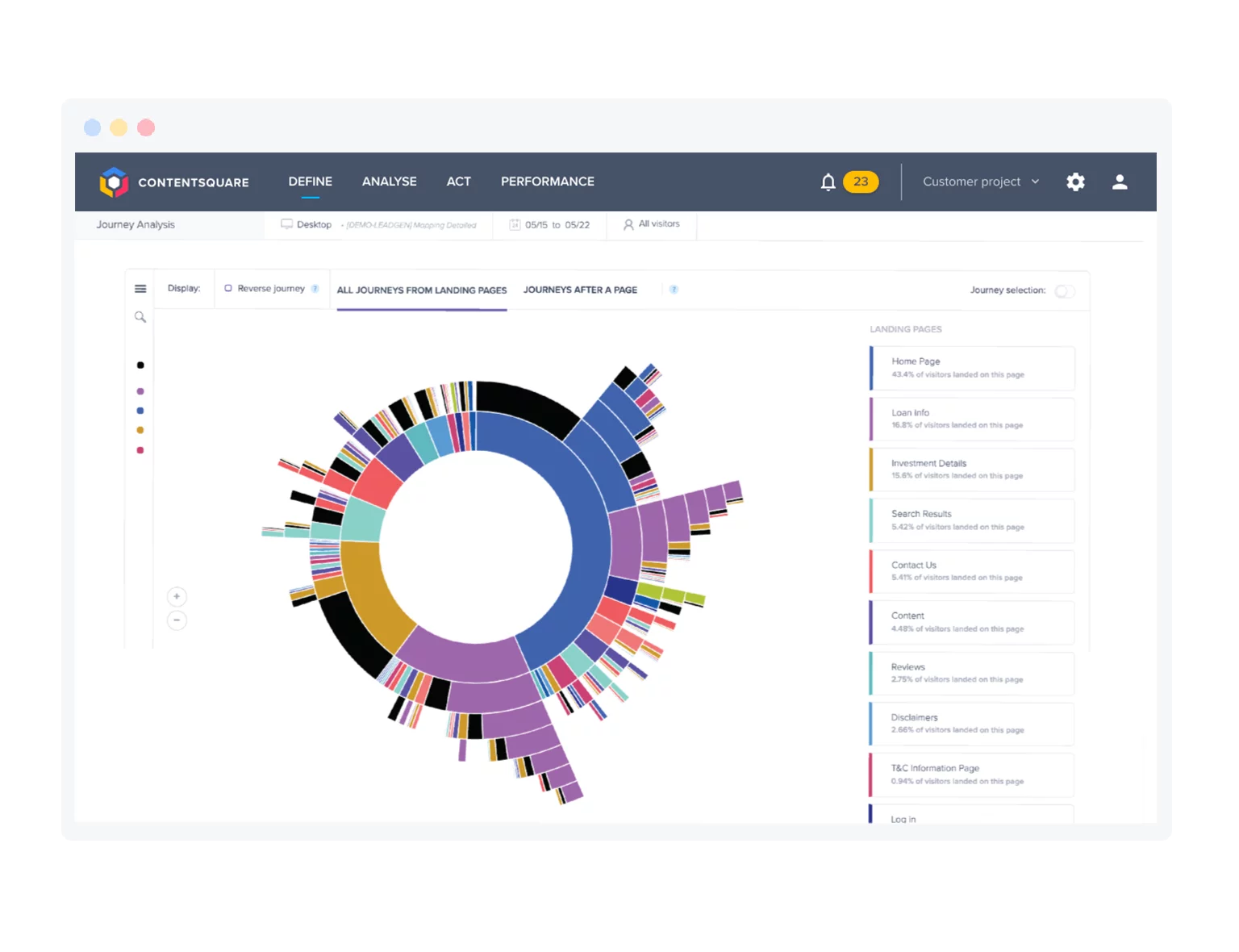

JOURNEY ANALYSIS

Identify friction and falloff points

Contentsquare's uniquely visual analysis capabilities make it easy to pinpoint friction in your user journeys.

Customer journey analytics

TROUBLESHOOTING

Find and fix performance errors

Zero in on the improvements that matter most to your business and to your customers’ happiness. Understand how to improve every step in the customer journey, and use attribution to quantify and prioritize potential improvements.

PRIVACY AND SECURITY

Built for the world's most sophisticated and secure digital brands

Contentsquare is trusted by more than 30% of Fortune 500 companies, meeting the world’s most stringent security and performance requirements. Our robust architecture can automatically and cost-effectively handle large, unpredictable workloads, seasonal traffic growth and immense surges.

Our solutions are GDPR- and CCPA-compliant, and our privacy-first philosophy informs everything we do.

Our commitment to privacy